Well, I misjudged things a bit. Though I didn’t realize it when I wrote my post yesterday, I had developed blisters on my feet from my long walk‒especially on the right foot, on which I had been wearing a spandex brace (prophylactically*‒I hadn’t yet been having any ankle problems, but wanted to avoid them if possible). So, today, I am not walking, at least not to the train/work.

I have realized that topical lidocaine creams, such as the max strength versions of “Icy Hot”, dull the irritation of blisters. That’s nice to know, in a pinch, though I don’t know if it would dull the pain of a pinch; it seems only to work with superficial pain, not deeper pain. Curiously, it also seems to dull some of the local signs and effects of inflammation (though ibuprofen contributed to that). Don’t worry, I’m not expecting to cover up my pain and forget about it. That doesn’t seem doable. I’ve tried.

If I could slather lidocaine all over my body and thus numb all my pain, believe me, I would do it. But I always hit a wall beyond which the numbing doesn’t reach. Heck, I’ve had multiple steroid/lidocaine epidural injections and they didn’t seem to do anything to my pain, even temporarily.

I should probably study up on the nature of congenital insensitivity to pain, just to see if the metabolic pathways involved in the condition shed any light on the sorts of things that might make a person have their pain sense shut off. Mind you, given the nature of that disorder**, I suspect that its effects come about through some aberrant development of the nervous system, not by the presence (or absence) of some neurotransmitter.

If memory serves, the saliva of the vampire bat has significant pain-reducing as well as anticoagulant properties. I’ve heard all my life about people thinking it would be good to investigate it as a source of potential powerful analgesics, but nothing has come of it, as far as I’m aware. It wouldn’t be all that hard to separate out the molecules in vampire bat saliva and examine them and try to replicate them. Heck, if you can figure out the bat’s biochemical process for making the molecules, you could develop transgenic bacteria that could produce the substance en masse, like how human insulin is made.

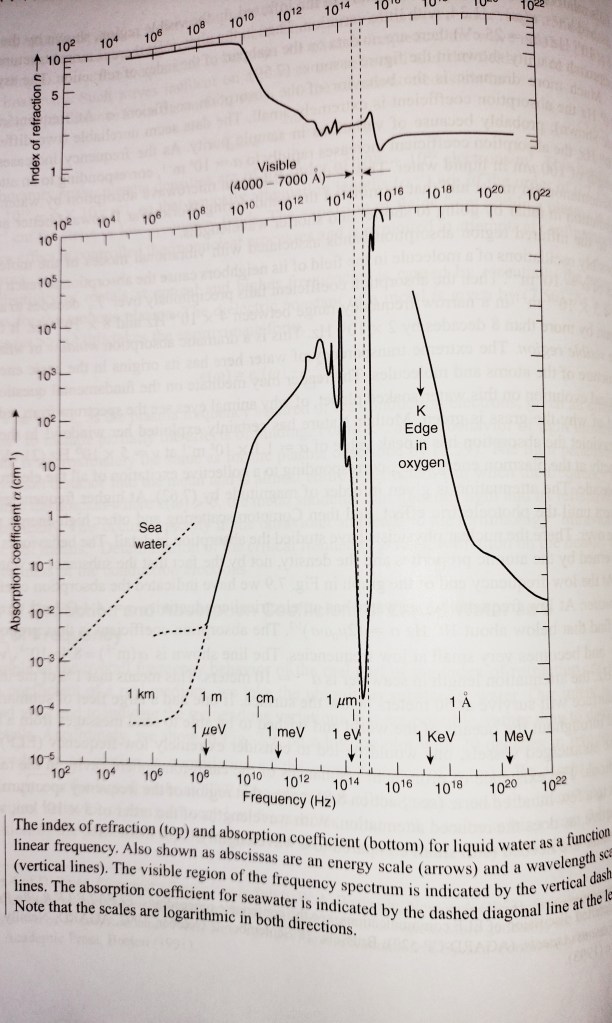

No, either there were unforeseen difficulties with using the vampire bat’s saliva analgesic, or no one was interested in doing the research (which seems unlikely but is not impossible), or “big pharma” has blocked the research because it would interfere with the sales of opioids and NSAIDs and so on (see the accompanying picture for an example of such interference). I would like to think that’s unlikely; after all, there would be tremendous potential for legitimate profit in a revolutionary new pain treatment.

Still, if it turned out that anyone in a big drug company or companies did block research into such a potential pain killer, then all the people involved would need to be strapped to tables and have all their joints and other “tender areas”, like genitals and nipples and lips and eyes, injected with some combination of‒for instance‒capsaicin and gympie-gympie leaf extract and fire ant venom, with some uric acid crystals*** thrown in for good measure. Oh, and also they should be given constant, powerful stimulants so that they cannot escape their pain by losing consciousness.

That’s if I don’t think of anything even better to do to them.

Obviously, I take pain treatment seriously. That should come as no surprise, given my personal, decades-long chronic pain and my own having gone to prison for trying (naively) to treat other people’s pain, only to be thrown under the bus by people who were taking advantage of my naïveté. I have very little patience for those who would interfere with other people reducing their pain and suffering, or who would make light of the suffering of innocent people.

Mind you, though I think vindictive thoughts and entertain vindictive fantasies, I would probably (like a moron and a sucker) feel pity even for people who had done such horrible deeds, and I would probably end their lives with minimal pain.

I would not feel bad about that though. People who willfully engender greater suffering in others for their own short-term (or long-term) profit, whatever form that might take (unless it is truly and honestly and reasonably something they perceive to be an emergency or an absolute survival need) are more than worthy of being erased from existence. And while it might be reasonable for those who knew them to miss them, they would not deserve to be mourned.

Look at me, getting all murderously vindictive about purely imaginary people, when there are so many real people who are thoroughly deserving of such animus. But, anyway, that’s enough of this weird-ass blog post for today. I’ll let you go to enjoy something more wholesome. Please have a good day if you’re able.

*I am pleased to note that my right ankle is in no danger of an unwanted pregnancy.

**And yes, it is a disorder, not just a “difference”, because it significantly reduces the survival and thrival of people who have it.

***Look them up; they’re related to gout.