It’s Friday again, at last, and this is indeed the final day of the work week for me. I am not expected to work tomorrow, and I think that even if they decided they were going to hold the office open tomorrow, I would not go in. I am too tired and dispirited to once again throw myself into the gears of the machine just because other people want me to do it*. Honestly, I feel it’s more likely that I’ll throw myself into real machinery than that I will go to work tomorrow.

Speaking of such throwing, as I was leaving the train yesterday evening, I found myself looking under the engine, seeing where the wheels meet the tracks, and wondering if I would have the guts just to lay my head across the track‒my neck, really‒and let myself be run over. It would be a quick death, I suspect.

I don’t think I have the guts, though, not right now. Also, it would be rude to screw up people’s commutes. But it does carry a weird kind of perverse attraction.

Nothing else of interest is happening, really. Well, perhaps one might concede that there are many interesting things happening, in the sense of the old curse, “may you live in interesting times”. Unfortunately, even those types of interesting things that are happening are so…well, almost so trite, so pathetic, so contemptible, so predictable, so “been done already”. None of the weird, would-be interesting things that are happening are impressive in any sense.

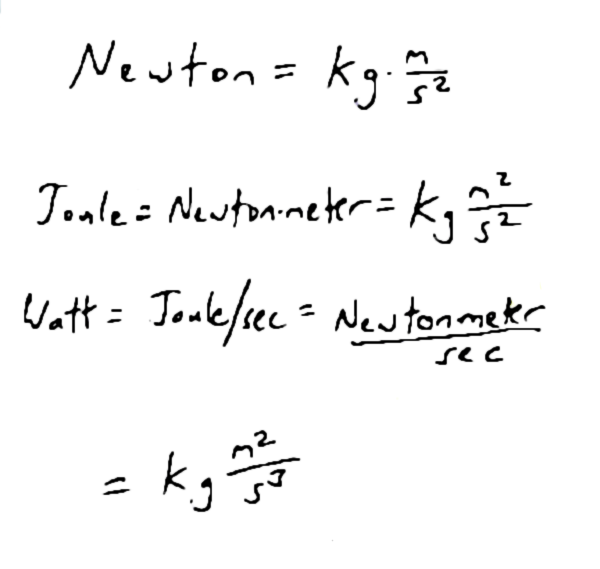

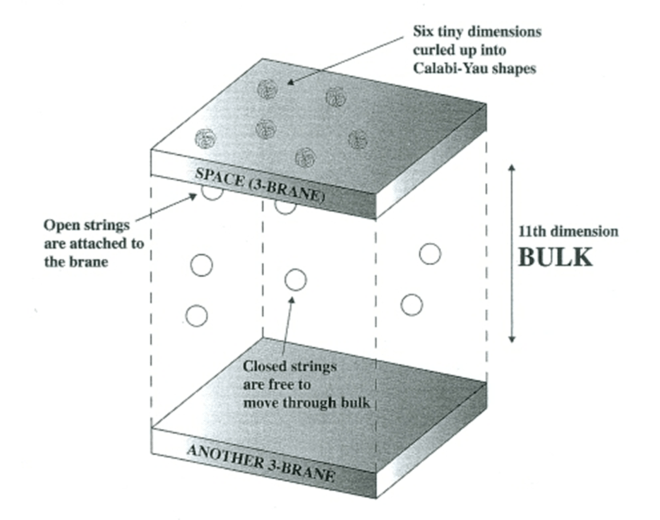

Okay, well, I’ll concede the relative interest and impressiveness of the Artemis II trip around the Moon recently. It would be more interesting if it hadn’t been something we’d done literally before I was born (3 months before, to the date, for Apollo 11’s landing), using computer systems that were‒to use the most conservative definition‒28 iterations of Moore’s Law ago. So, literally, by more (ha) than one measure of that “law”, we are at least 2 to the 28th power times as advanced, computationally, as we were when we first went to the Moon. That’s conservative, because I’ve heard descriptions of Moore’s Law that put the doubling time at 18 months, in which case there would have been about 37 doublings since then.

For those of you for whom exponentials don’t carry quite the visceral impact they ought to, 2 to the 28th power is 268,435,456. So, by the more conservative, every-two-years characterization of Moore’s Law, our current computational powers are more than 268 million times what they were in 1969. By the less conservative, 18-month estimate, they are 2 to the 37th power, or 137,438,953,472, times as great, so more than 137 billion times as advanced.
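For anyone who wants to check the doubling arithmetic, here is a small Python sketch. It assumes the Apollo 11 landing date (20 July 1969) as the start and 1 April 2026 as the end, the latter picked only as a stand-in for “around the time of Artemis II”. The helper name `doublings` is my own, and it counts only whole doubling periods.

```python
from datetime import date

# Assumed start: the Apollo 11 landing. Assumed end: a stand-in date in
# early 2026, roughly when this post was written (not an official date).
START = date(1969, 7, 20)
END = date(2026, 4, 1)

def doublings(start: date, end: date, period_years: float) -> int:
    """Count the whole Moore's Law doubling periods between two dates."""
    elapsed_years = (end - start).days / 365.25  # average year length
    return int(elapsed_years // period_years)

# Compare the two-year and 18-month characterizations of Moore's Law.
for period in (2.0, 1.5):
    n = doublings(START, END, period)
    print(f"doubling every {period} years: {n} doublings, factor {2**n:,}")
```

With those dates, the two-year period gives 28 doublings (a factor of 268,435,456) and the 18-month period gives 37 (a factor of 137,438,953,472), matching the figures above.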

To be fair, it’s just computer tech that has advanced like that. The process of engineering rockets hasn’t improved to the same degree, because that’s a large-scale engineering thing, and is more constrained by the rate at which one can directly interrogate nature and build and test technology. Still, we went from Kitty Hawk to the Moon in about two thirds of a century, but in the more than half a century since, we’ve certainly not extended that streak much.

Okay, to be fair, we got pretty good at sending out space probes and such. Even then, though, our most distant and still most impressive probes were launched in the late seventies. There have been some quite impressive things since, and I intend no shade to be thrown at them. That Pluto thing was very impressive, as are almost all of the Mars missions and the probes sent to other planets (on the other hand, the ISS isn’t that much more impressive than Skylab was).

The things that have improved significantly have done so largely because the increased capacity of computers has assisted in modeling and, well, computing things. So, rocket science has improved to the degree that computer science has improved, multiplied by the fraction of that improvement that can actually make a difference in how well rockets can be made. Something like that, anyway.

Yeah, rocket science hasn’t advanced much in my lifetime. Brain surgery has done a bit better, but not as much better as one might have reasonably expected.

Then again, we’ve certainly improved our ability to make memes, and now AI images and videos, to make fun of people and express our own loyalties or outrages. Yes, in a real sense, many of our greatest advances in recent decades have been improvements in our ability to hurl feces at each other like the monkeys we all are. We appear to be more engaged by such shit-flinging even than we are by sex, which seems mind-boggling.

I say “we” but that broad description does not apply to everyone. Some people are still more interested in sex. At first glance, that would seem to be the more evolutionarily stable of the approaches, but it’s an empirical question, so we can really just wait and see which, if either, of the two tendencies prevails in the long run.

To paraphrase Dave Barry, I myself plan to be dead.

Anyway, that’s about all I have to say for this week. I don’t mean to make a post tomorrow, but of course, as always, that is barring the unforeseen.

I hope you have a good weekend.

*Okay, to be fair, that’s not really the reason. I do it because I get paid.