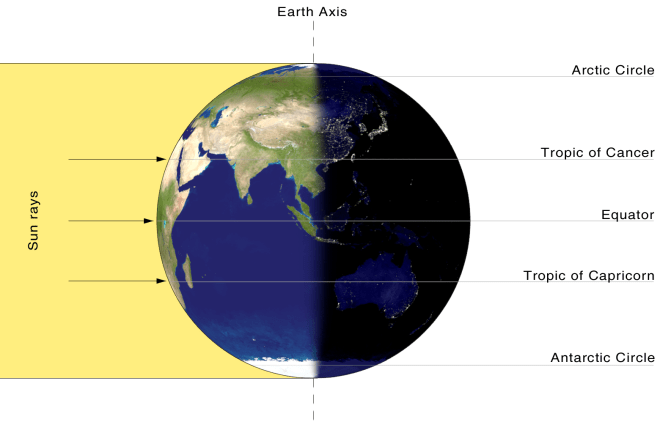

It’s Friday again. But it’s not just any Friday‒it’s the Vernal Equinox, the day when the line between the Earth and the Sun is orthogonal to the line of the axis of the Earth, and so the day and the night will be (effectively) of equal length. This is more fun in some ways than the solstices, because it’s the same for everyone, northern and southern hemispheres.

Of course, in the north it’s officially the Vernal Equinox, heralding the beginning of spring, whereas in the south it heralds the beginning of autumn. I don’t know, however, if it is officially called the Autumnal Equinox in the south. Probably it is. After all, I’m sure they have their “official” winter solstice on what is “our” summer solstice and vice versa. It would be a bit perverse for them to do otherwise.

It’s somewhat interesting to note, as Neil deGrasse Tyson has pointed out with some ardor, that since winter, for instance, officially begins on the “shortest”* day of the year, the days actually get longer and longer through the winter (and the opposite happens in summer), until finally, on the Vernal Equinox, daytime draws even with the night and then passes it.

I wonder what Zeno would say about that race.

On a different topic, it’s quite rainy here this morning, and it’s a rather chilly rain, which is mildly unusual for south Florida. It occurred to me, seeing just how sloppy it is here at the train station, that I hope it will not be so rainy at my destination. What’s interesting about that is that it may not be rainy at all there, at work. And yet, it could still be raining heavily down here in Hollywood.

In the modern world, weather can seem to change much more rapidly than it really does because we travel through the weather, whereas throughout all of our ancestral time we would merely have seen the weather passing over us. It can give a somewhat misleading impression of how quickly the weather changes, even in Florida, where it can be raining on one side of a street and dry on the other**.

I recall that when I was visiting my grandparents as a child, there were times we would all be going somewhere in the car, and as we went along it would start to rain heavily, all of a sudden‒and then, just as suddenly, as we went along, it would stop. And then it would suddenly start again, and then stop again, and so on.

But even in south Florida (or, well, west central Florida back then) the weather doesn’t change like that if you’re sitting still. It changes quite rapidly compared to many other places, but not the way it seems to do when one is traveling in a modern vehicle.

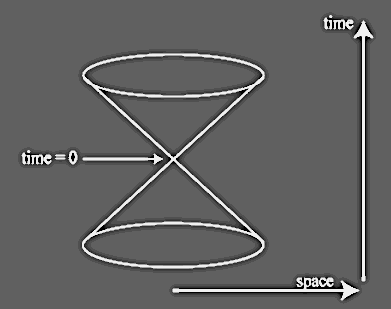

For some reason, I feel as though there’s an analogy or insight available here with respect to special and possibly general relativity, but I don’t feel like trying to explore it right now.

I did bring my hardcover copy of General Relativity: The Theoretical Minimum, which is part of Leonard Susskind’s Theoretical Minimum series, with me when I left the office yesterday, thinking I might read it while on the train last night. I did not read it. There are too many distractions, it seems, for me to be able simply to flip my attention into focus on that, however much I really am interested in it. It’s frustrating.

I have read part of it, mind you, as well as parts of the other books in the Theoretical Minimum series. I have all of them in both physical copies and on Kindle, so really, I didn’t need to bring the physical book. But it is a lovely hardcover edition, and I hoped that might make me more likely to read it, since reading a nice hardcover is much more pleasant than reading a Kindle book on one’s phone, though that can still be fun.

I also entertain the admittedly absurd fantasy that I might be reading the hardcover copy on the train some day and some other, like-minded person (preferably an attractive woman) might notice and be interested because she is into the subject as well, and so on.

This is particularly silly as pipe dreams go, because even if such an absurd event happened, I would definitely screw the whole thing up. I tend to be quite terse when strangers try to speak with me, even if they are beautiful women.

Looking back on my life, I’m sure that there have been several occasions on which someone was expressing interest in me, but I didn’t get it or got too anxious and froze up. Sometimes I figured it out soon after, and sometimes it took longer. There are probably some cases that I never noticed at all, even in hindsight.

Of course, I was married for fifteen years, during some of which I was in medical practice, and so such interactions would have had a different character. There were sometimes more flagrant and obvious “advances” in that time, because, well…doctor. But I never had any inclination to pursue them, even when I recognized them; I’m not the kind to want to cheat on a partner. Hell, I’m not even the kind to seek a new partner two decades after my wife divorced me (though I briefly tried a little).

I wouldn’t mind a nice relationship, but I know that I am difficult to handle in many ways (I try not to be, but I am weird, and not in some charmingly popular manner), and in certain senses, my standards are high, or at least they are fairly strict. For instance, someone who doesn’t read for pleasure is unlikely to be terribly interesting to me. It’s not impossible; there are other ways for people to be interesting and smart. But not liking to read would definitely be an entry for the “con” column, not the “pro” one.

I don’t know what I’m doing, going on about such nonsense. I am not going to have any more romantic relationships in my life. I am going to die alone, as is only appropriate and to be expected for something like me. And while I won’t say “it can’t happen soon enough for my taste”***, I do really feel impatient for it. I wouldn’t say I am “eager” for it, because that’s a positive feeling. I am just quietly desperate for it, like someone trying to find an exit from a (slowly) burning building.

Anyway, that’s enough for today. I hope you have a good one, and that you have a good weekend as well. Yes, I mean you.

As for me, well, I am to be working tomorrow as far as I know, so I will be writing a blog post tomorrow, barring the unforeseen.

*Of course, this is a bit of a misleading characterization. The day is the length that it is‒roughly 24 hours‒and does not change very quickly, for which fact we should all be grateful. It’s just the length of time in a given day during which the sun is above the horizon (so to speak) that varies.

**This is not an exaggeration. I have seen it myself on many occasions. It seemed to happen more frequently in the area where my grandparents used to live (Spring Hill, north of Tampa) than it does down here‒or maybe I noticed it more because I was a kid‒but it is very real and quite impressive when it happens.

***Except to say that I won’t be saying it.