It’s Friday now (as I write this, anyway), and I think that I will have tomorrow off. But, as some of you may have noticed, the specific plans about my work Saturdays are subject to rather erratic change. It’s quite annoying; I don’t really like unexpected changes to plan. I particularly don’t like them when I don’t agree with the reasoning behind them.

Of course, our two most consistently top-performing salespeople at the office contracted, when they came aboard, not to work on any weekends. And, as I said, they are consistently our best. Could there be a causal connection between those facts? Well, correlation does not necessarily imply causation, of course, but enough correlation should at least shift your credences.

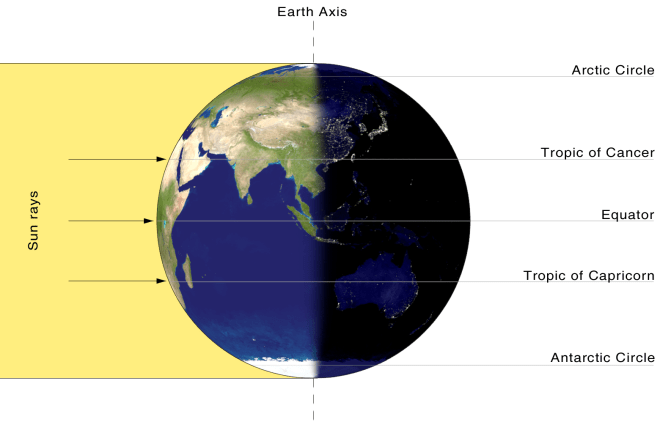

Unfortunately, humans are not naturally good at probability and statistics. This is part of why I think the subject(s) should be taught in standard education, starting quite early. Though the subject(s) can be somewhat counterintuitive, the mathematics is not really all that rarefied or difficult, and probability and statistics apply to so much of the world. On the smallest scales they seem to apply fundamentally.

Anyway, I didn’t come here today to discuss probability and statistics, though obviously I enjoy the subject(s). So, then, what have I come here today to do or to discuss? Well, now that I think about it…there is no particular subject. I don’t know why that should surprise any regular reader, let alone me.

It will probably not surprise you that I have not started playing on Babbel or Brilliant yet. I do at least look at the apps frequently throughout the day, considering using them and so on. For whatever that’s worth.

I can allow myself some excuse with Babbel, since it’s difficult to practice a language in a busy office. But there’s no such reason not to use Brilliant. Its teaching and exercises are set up in nice, granular ways, so you can do one problem then get called away by work, or whatever, and then go back.

I don’t even mind the rather hokey “experience point” system they use to reward you when you get an answer right. It’s kind of fun, but it’s not too involved or taken too seriously by the app makers (or so it seems, anyway). And I definitely have learned new things on the app in the past, and honed and renewed prior skills as well. So it’s not a waste of time by any means.

The same cannot be as confidently said* about the various apps/sites on which I no longer have accounts.

Of course, time passes‒or whatever it is that time really does‒no matter what we do, and sometimes “wasting” it can be a fulfilling choice. If we are metaphorical virtual particles then we can behave like them from time to time, not just heading directly to the next interaction, but maybe throwing out an electron-positron pair and then reabsorbing them before they could be detected, or going around the universe and coming at the interaction from backwards in time and behind, as it were, just to show off a bit.

Not everything has to be useful, at least not in too narrow a sense. Usefulness, like so many things, is in the eye of the beholder. It is certainly not a universal, general attribute of reality. So, while it may only rarely be wise to be counterproductive from one’s own point of view, there are times when it’s good‒maybe even useful, ironically‒not to worry about whether something has any point or not.

Yeah, I’m not terribly good at doing that, either. I don’t know how much of that is due to culture/upbringing and how much of it is genetic or at least neurodevelopmental. I’d guess it’s not too far from 50/50, but I would not be shocked to find the full truth surprising.

Regarding whether to worry about app usefulness or lack thereof, and whether to spend time on the ones that I will later wish I had spent time on, well, it’s been said that wisdom, at least a form of it, is the ability to follow your own advice (i.e., the advice you would give to someone else if they were in your circumstances). I think most people would be able to recognize that, by that particular definition, we are all quite unwise, quite often.

Okay, well, I’ll start to wrap this up. I really should not be working tomorrow, but if I do, I will almost certainly write a post. It’s quite unlikely‒I would call it less than 20% likely‒that I will work, but we shall see. You can check in if you’re “in the neighborhood”. Don’t look for my posts to be shared on Facebook or Threads anymore, but I do share them on Substack and Bluesky and TWFKAT. And you can always find them here, directly, and comment if you wish.

Have a good weekend in any case. That’s an order!

*Well, it can be said, but talk is cheap, mother f#cker. Rather often, people say they are confident and act sure about situations or information that they cannot know with confidence. I always consider this unwarranted confidence to be a “red flag”, a warning sign that this person’s judgment is unreliable.