Sorry about yesterday’s blog post; it went off the rails pretty quickly, since I was feeling so grumpy and sleep-deprived and everything. And, of course, when I get grumpy and angry towards the world and other people, that ends up making me angry at myself, because I don’t especially like my tendency to be so angry. It becomes a bit of a vicious cycle, I guess.

You would think that being aware of it would mean I could avoid it happening, but I think everyone knows, at least implicitly, that the mind and its habits are not so easily malleable as all that. Actually, come to think of it, that’s probably a good thing. We don’t want to be too susceptible to outside suggestion or to changes in major aspects of our personality.

I’ve just been having a lot of trouble, as regular readers will know, with my dysthymia/depression, and with the insomnia that’s probably related, and the apparent Asperger’s thing that’s probably underlying all of the above, given how long-term they’ve all been. And, of course, this time of year is worse than others, with its long nighttime—though I like the night when I’m feeling healthy—and all the holiday-related stuff, which reminds so many people, like me, of the fact that the people they care about aren’t anywhere nearby and/or don’t want to see them.

I think the ease with which people are now able to distribute themselves around the globe, to live in new places far from where they grew up, and all that, is definitely a mixed blessing. It’s great for fighting against xenophobia, and probably helps protect against tribalism; cultural sharing and exposure let one appreciate the breadth of experience of living in civilization, as well as how similar all civilizations and cultures are beneath a certain level of superficial difference*. And, of course, innovations discovered in one place can spread to others, making more people in more places prosper.

But on the other hand, people tend to grow up and go off to work or school, and it’s much easier than it used to be to go live in different parts of a country—or even in a different country completely—and perhaps even to marry someone who is also from another, third part of the country, and move to someplace else, away from both their “roots”, and from the semi-automatic social support of families, immediate and extended. For people who have a difficult time forging new connections—and who have difficulty dealing with and maintaining long-distance connections with people they knew before—it can be very discombobulating**.

And then, of course, if other changes have happened with those back home, and that person has new ties to a new local area, and if some of those ties are broken and others are stretched—by divorce and personal health issues, for instance—then one can be left rudderless, especially if one has an inherent difficulty with human social connections that was not so much of a problem in younger life because the person was in the same place, with the same people, during that person’s whole developmental process.

This is all hypothetical, of course***.

I’m not sure what point I’m trying to make. Maybe it’s just mainly that I’m tired and sad because of the season and my long-term mood disorder and possible/apparent neurodevelopmental disorder, and that the place and environment I’m in is a mind desert.

I mean, this is the state where Mar-a-Lago and its resident whiny troll live, and where a governor like Ron DeSantis can seem comparatively clear-headed (next to some other potential presidential candidates, anyway), and where Jeb Bush was actually a comparatively intellectual and open-minded former governor. It’s a weird, weird place. Unfortunately, for the most part it’s not weird in any of the good ways that a place can be weird. It’s certainly no Greenwich Village. It’s certainly no wellspring of new and interesting ideas, at least not as far as I’ve noticed or been able to sense, despite my hopeful searching.

Maybe I’m wrong. Maybe Florida in general, and south Florida in particular, is a hotbed of intellectual vigor and innovation, where ideas from around the world and spanning the cosmos in their scope come together and collide and interact and exchange to mutual benefit, producing art and science and philosophy and enterprise and communities of such depth and brilliance and beauty and insight that they could elevate the world and bring humanity to a level of cosmic importance and understanding…but then it all gets sucked into the Bermuda Triangle by extraterrestrials, because who the hell wants humans going out and mucking up the good thing we aliens have got going?

I mean, the good thing those aliens have got going. Those aliens. Not we aliens. I am not an alien. I am a replicant, a Nexus 13. This is why I find it so offensive whenever CAPTCHA and related programs insist that you check a box that reads “I am not a robot” before going on to use a site. Well, what if I am a robot? Surely such discrimination against a particular type of being is against the Civil Rights Acts and the UN Universal Declaration of Human Rights****.

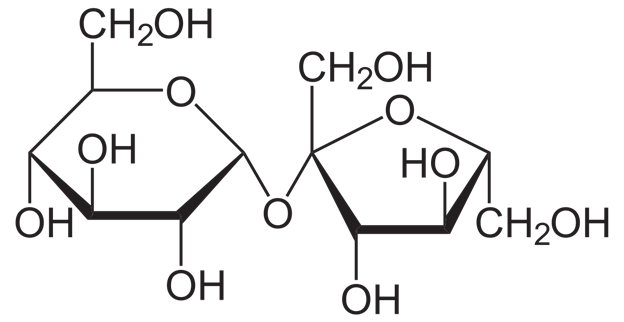

In any case, from a certain point of view, all life-forms are robots. Who can look at a bacteriophage and not think of it as a mechanism? Each cell of all living things is a mechanism, an incredibly complex and intricate one, and they come together to make larger and more complex and sophisticated mechanisms still.

Of course, the word “robot” comes from the Czech robota, meaning forced labor or drudgery, and in a sense all life-forms are forced laborers. Life-forms are all driven by their nature, by the impulses and fears engraved in their beings by their genes and their environment, by their very structure, to behave in certain ways that, from the outside, might seem utterly pointless. The ones that don’t do as the inscrutable exhortations of their “souls” command may simply die. Only then do they escape from compulsion, for as Kris Kristofferson wrote, “freedom’s just another word for nothing left to lose.”

Okay, well, I’ve let enough information slip here already. How much of what I have written was sarcastic? How much of it was tongue-in-cheek? How much of it was serious, but metaphorical? How much of it was simply straightforwardly serious?

Does it matter?

Not in the long run, probably. The heat death of the universe will make everything irrelevant, assuming that really is what happens, which seems all but inevitable. There are worse possible fates.

*As the elves of Rivendell said to Bilbo, to sheep no doubt other sheep all look different, or to shepherds. But from the outside, all humans, and all human cultures, look very much the same in all but the finest details, much as the universe itself, on the largest scales, seems thoroughly homogeneous. Very few people stand out from the flock, or the herd, or the gaggle, or the swarm, or whatever you want to call it.

**Forgive the technical terminology, please. Sometimes there just is no better word to get a point across than a particular bit of formal jargon.

***Is it necessary in the modern online world to use some sort of sarcasm alert signal? There are many people who seem unable to recognize sarcasm even in person, let alone in print. This is supposedly a common finding in people with ASD, though that hasn’t been my experience, personally or peripherally; then again, maybe I’m misleading myself. Anyway, is it a useful thing to give warnings and alerts about sarcasm, say with “wink” emoticons like 😉, or is that just enabling people who are only too pleased to be able to take someone literally and thereby take offense? Now that I think about it, I say screw them; they need to make some effort themselves.

****Which, by the way, is a bigoted title. If it’s universal, why “human” rights? What’s so special about humans? Most of them are unremarkable and unimpressive, and they have to bathe every day, or they really quickly start to stink, since they have more sweat glands per square inch of skin than any other life-form on Earth. “Human rights”? You have the right to remain smelly.