It’s Monday again; indeed, it is the last Monday in March in 2026 (AD/CE), for whatever that’s worth. This Monday shall never come again.

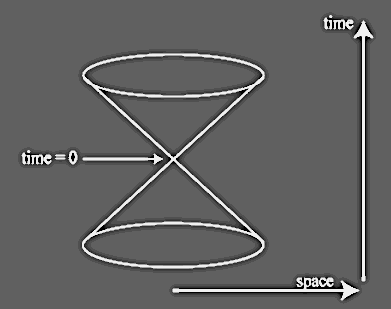

Then again, of course, no Monday shall ever come again. Such is the nature of time. This is one of the facts that make senseless the expression “That’s a [measure of time] I’m not gonna get back”. Well, duh! You never get any of your experienced amounts of time back. That’s the nature of time, and the nature of its directionality, dependent upon the second law of thermodynamics.

Even if one could rewind time, one would not “get a moment back” the way people talk about it. If, like the events of a movie or other video story, one could rewind life, it would not be you (the self who spoke of getting the moment back) who would experience the events anew. It would just be a return to an earlier state, in which you would again be experiencing all the same events, not merely as if for the first time, but actually for the first time. The posterior events would be erased for you as you traveled back.

It’s not like playing a video game where you can “regenerate” at your most recent save point, but you can remember what happened to your character before it “died” so that you can learn from your mistakes. There is no one playing your character (i.e., you) and able to learn from a repeated past. You are not the player of the game; you are the character. You are part of the game. You are part of the movie, not watching it from outside. If it resets, you reset; if it rewinds, you rewind, and all memory of any events that happened disappears along with the future.

Whether you would repeat the same events, like the characters of a movie/show, or might do something different, like a video game character, is less clear, but it doesn’t much matter. You are still going through each moment once, effectively, and you can only learn from mistakes to affect your behavior in the future. If your mistake kills you, you’re just dead.

Even if time were a closed loop‒if the future of the universe wrapped around and became “the past” again, forming a closed and fixed structure, as appears to be possible in principle according to General Relativity‒you wouldn’t get to experience it as happening again. Each time, you would experience reality for the first time.

Just as there is no fixed self looking out from behind your mind, there is no external rememberer hovering over your reality, able to experience your experiences for the first time but as if not for the first time. You are a phenomenon within reality, not a sojourner through reality who accumulates knowledge that could be used in reliving the past, but better.

If you could rewind yourself except for your mind, somehow retaining your memory of “the future”, that would not be truly returning to the past. Rather, it would become the next set of events in your future. It would also demand an answer to the question of how you could become your earlier self and yet remember your later self, since your memories are functions of your brain.

This is what makes things like Alzheimer’s and other forms of dementia or brain damage so tragic‒they literally are injuries to what makes us ourselves. If you lose all memories of your past, then in a very real sense, the person you were is already dead.

Of course, even in healthy states, without brain damage, your past self is still “dead” with every new moment that arrives. Every time you sleep and then wake up, it may as well be that you have died and then been recreated in the morning, just with implanted memories from the previous person, the one who died. There would be no way for you to know if there were no difference in your brain and the rest of your body. Indeed, it’s in principle possible that this actually happens with each passing moment, or even each passing Planck time.

Only the past can be remembered. Only the future, even in principle, can be planned and affected. And only the ever-moving present can be experienced. There is, of course, a continuity that is required for us to have any sense of a unified personhood at all, but as Sam Harris has pointed out (more than once), your memories of your past are merely thoughts in your mind in the present moment, as are your plans for the future.

So it really can make sense to “get over yourself”, in more than one way. It’s worth recognizing that you’re mortal and‒whatever you may believe‒as far as we know, death is the end, and all that you were will be gone after that. But it’s also worth recognizing that, in a nontrivial sense, each day all that you were the day before is already gone.

Still, though you exist only in any given present moment, memory at least allows us to learn and, hopefully, do better in the future than we would if we didn’t have it. That’s why memory is a trait that gets selected for and is evolutionarily stable: its presence makes creatures with that trait more likely to survive and reproduce than those without it, ceteris paribus.

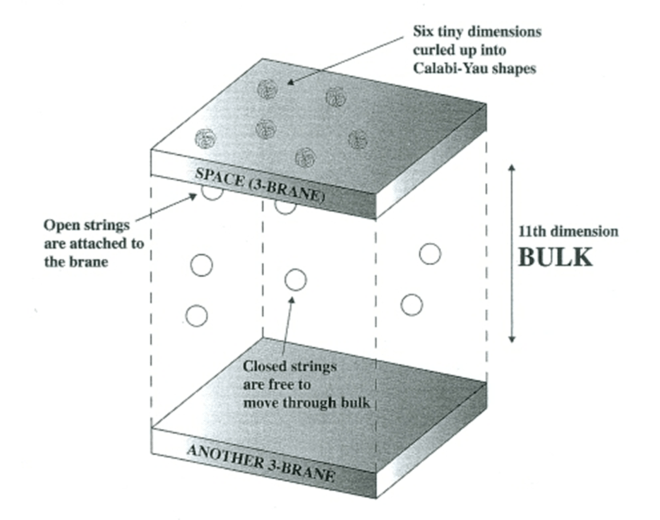

As with most such subject-specific blog posts, I could go on and on about this. A thousand (or, well, a lot of) other thoughts arise that could be expressed as I write what I do write. But I have finite space and finite time (even if spacetime is infinite) in which to write this post, so I’ll stop here for the moment.

Welcome to the new week. I hope it’s a good one for you. Heck, I hope it’s a good one for everyone, even “bad” people (with the caveat that “a good one” entails such people becoming better than they presently are).